Rapid advances in artificial intelligence (AI) have made the digital information landscape harder to navigate. A recent incident involving journalist Ben Black and one of his April Fools’ stories shows how AI tools can misread satire and spread it as fact. This feature recounts Mr. Black’s experience and how his prank inadvertently became a source of misinformation circulated by AI tools.

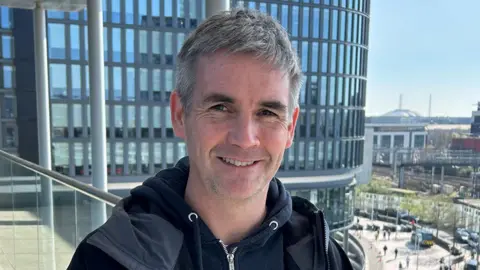

Every year, journalist Ben Black marks April Fools’ Day by publishing a fake news story on his community news site, Cwmbran Life. Over the years, his tales have ranged from a Hollywood-style sign on a mountain to a nudist cold-water swimming club at a nearby lake. The stories are humorously exaggerated and meant to entertain the community.

In 2020, Mr. Black’s April Fools’ article claimed that Cwmbran had been recognized by Guinness World Records for having the most roundabouts per square kilometer. He invented a figure to support the claim and fabricated quotes from local residents to lend the tale credibility. At first, the story was well received and amused his readers.

However, the fun took a concerning turn. Although the article was amended the same day to make clear that it was fictional, Black later found Google’s AI treating it as a legitimate source of information. While searching for his previous stories on April 1st, he was astonished to see Google’s AI tool presenting his fabricated claims as fact. The discovery alarmed him and raised critical questions about whether AI systems can distinguish genuine news from satire.

The implications extend far beyond personal embarrassment for Black: the incident exposes a significant flaw in AI-powered information dissemination. As Black put it, it is unsettling that someone searching for trustworthy information about road infrastructure in Wales could come across a blatant fabrication presented as fact. His story may not have posed a grave danger, but it is a stark reminder that misinformation can spread through platforms people regard as credible and mislead the public.

Ben Black’s experience has ignited concerns regarding the role of AI in journalism and content creation. He noted that many independent publishers face substantial challenges in an environment where larger news organizations have established partnerships with AI enterprises, allowing them to leverage original content. Black expressed his frustrations, highlighting the disparity: while prominent media companies may benefit from these collaborations, smaller independent voices are left to struggle for acknowledgment and viability in an increasingly competitive marketplace.

Black did not publish a fake story for April Fools’ Day this year, citing time constraints, and the disheartening experience has contributed to his decision not to continue the tradition. He reflected that AI tools, originally intended to enhance the user experience, have instead become a means for others to exploit original content without consent, further jeopardizing the livelihoods of independent journalists and publishers.

In conclusion, Ben Black’s encounter with Google’s AI highlights a growing dilemma in the digital information age: the blurred lines between factual reporting and fabricated stories, particularly in an era where AI tools increasingly influence the way news is consumed. This incident serves as a cautionary tale, urging us to scrutinize the sources of information we engage with and consider the potential ramifications of AI technology on the integrity of journalism.